Project Management With RStudio

Overview

Teaching: 15 min

Exercises: 30 min

Questions

How can I manage my projects in R?

Objectives

Create self-contained projects in RStudio

Introduction

The scientific process is naturally incremental, and many projects start life as random notes, some code, then a manuscript, and eventually everything is a bit mixed together.

Managing your projects in a reproducible fashion doesn't just make your science reproducible, it makes your life easier.

— Vince Buffalo (@vsbuffalo) April 15, 2013

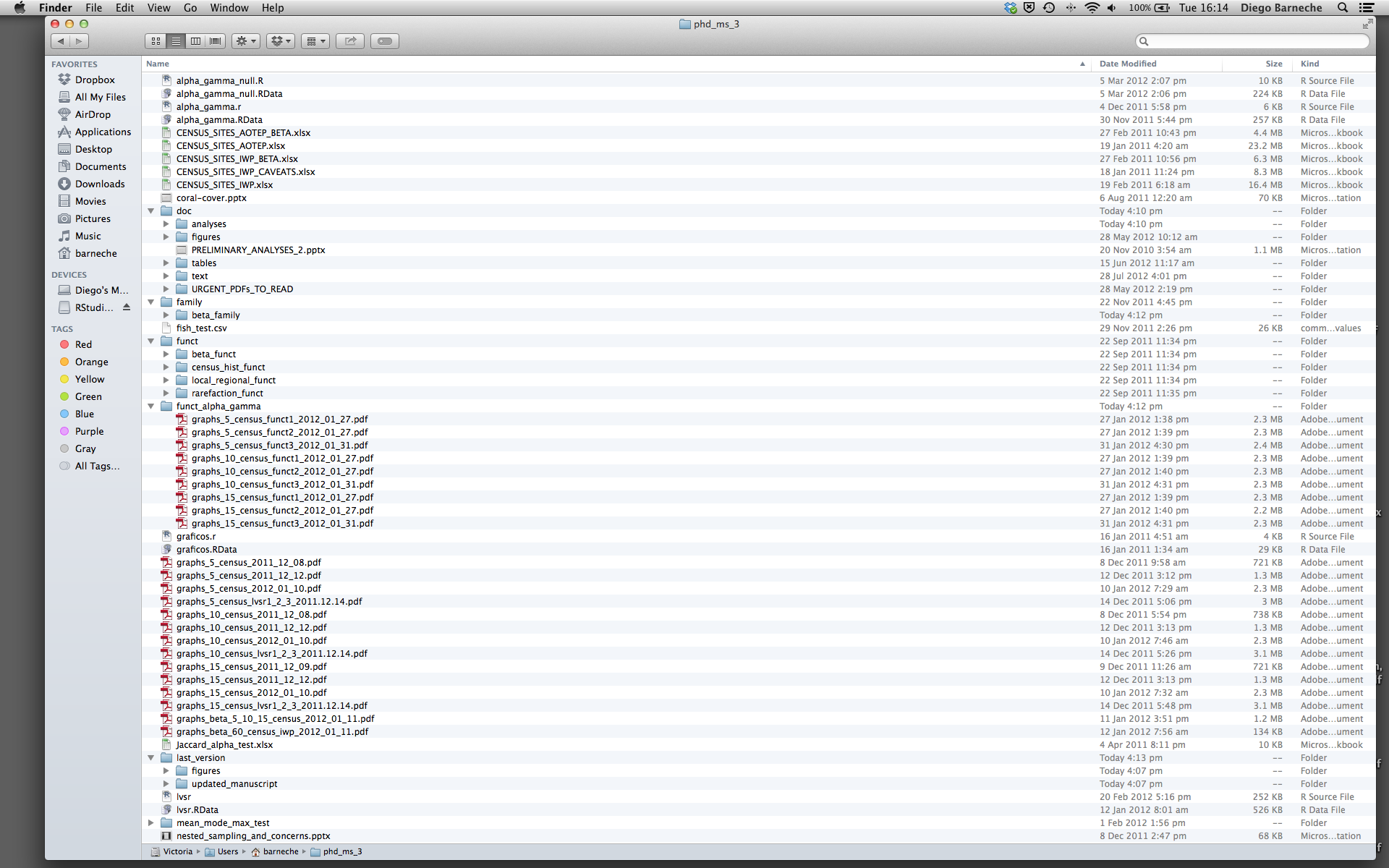

Most people tend to organize their projects like this:

There are many reasons why we should ALWAYS avoid this:

- It is really hard to tell which version of your data is the original and which is the modified;

- It gets really messy because it mixes files with various extensions together;

- It probably takes you a lot of time to find things, and to match each figure to the exact code that was used to generate it.

A good project layout will ultimately make your life easier:

- It will help ensure the integrity of your data;

- It makes it simpler to share your code with someone else (a lab-mate, collaborator, or supervisor);

- It allows you to easily upload your code with your manuscript submission;

- It makes it easier to pick the project back up after a break.

A possible solution

Fortunately, there are tools and packages which can help you manage your work effectively.

One of the most powerful and useful aspects of RStudio is its project management functionality. We’ll be using this today to create a self-contained, reproducible project.

Challenge: Creating a self-contained project

We’re going to create a new project in RStudio:

- Click the “File” menu button, then “New Project”.

- Click “New Directory”.

- Click “Empty Project”.

- Type in the name of the directory to store your project, e.g. “my_project”.

- If available, select the check-box for “Create a git repository.”

- Click the “Create Project” button.

Now when we start R in this project directory, or open this project with RStudio, all of our work on this project will be entirely self-contained in this directory.

Best practices for project organization

Although there is no “best” way to lay out a project, there are some general principles to adhere to that will make project management easier:

Treat data as read only

This is probably the most important goal of setting up a project. Data are typically time-consuming and/or expensive to collect. Working with them interactively (e.g., in Excel), where they can be modified, means you are never sure where the data came from or how they have been modified since collection. It is therefore a good idea to treat your data as “read-only”.
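One way to back this up in practice is to remove write permission from the raw files. The following is a minimal sketch, assuming a Unix-like system; the file is a stand-in created just for the example, and its contents are made up.

```r
# Stand-in raw data file so the sketch runs on its own (contents are made up)
raw_file <- tempfile(fileext = ".csv")
writeLines(c("id,value", "1,3.2", "2,4.8"), raw_file)

# Remove write permission: owner, group and others may only read.
# On Windows, Sys.chmod() can only toggle the read-only attribute.
Sys.chmod(raw_file, mode = "0444", use_umask = FALSE)

# Reading still works; scripts should write their results elsewhere
raw <- read.csv(raw_file)
file.info(raw_file)$mode
```

In a real project you would apply `Sys.chmod()` to the files in your raw data folder rather than to a temporary file.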

Data Cleaning

In many cases your data will be “dirty”: they will need significant pre-processing to get into a format R (or any other programming language) will find useful. This task is sometimes called “data munging”. I find it useful to store the scripts that do this cleaning in a separate folder, and to create a second “read-only” data folder to hold the “cleaned” data sets.
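A cleaning script along these lines might look like the sketch below. The folder names, file names and columns are invented for illustration, and the demo writes to a temporary directory so it can be run anywhere.

```r
# Illustrative layout: a raw data folder and a separate folder for cleaned data
root <- file.path(tempdir(), "cleaning_demo")
raw_dir <- file.path(root, "data")
clean_dir <- file.path(root, "data_clean")
dir.create(raw_dir, recursive = TRUE, showWarnings = FALSE)
dir.create(clean_dir, recursive = TRUE, showWarnings = FALSE)

# A small "dirty" raw file: stray whitespace and a missing value
writeLines(
  c("country,pop", " Norway ,5000000", "Chile,NA", "Kenya,52000000"),
  file.path(raw_dir, "raw.csv")
)

# The cleaning step only reads the raw file ...
raw <- read.csv(file.path(raw_dir, "raw.csv"), strip.white = TRUE)
cleaned <- raw[!is.na(raw$pop), ]

# ... and writes its result to the folder for cleaned data
write.csv(cleaned, file.path(clean_dir, "cleaned.csv"), row.names = FALSE)
```

The raw file is never overwritten, so the cleaning can be rerun from scratch at any time.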

Treat generated output as disposable

Anything generated by your scripts should be treated as disposable: you should be able to regenerate all of it from your scripts.

There are lots of different ways to manage this output. I find it useful to have an output folder with different sub-directories for each separate analysis. This makes it easier later, as many of my analyses are exploratory and don’t end up being used in the final project, and some of the analyses get shared between projects.
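As a rough illustration of what “disposable” means, the sketch below writes one analysis result into its own sub-directory of an output folder, deletes it, and regenerates it. The function name, folder names and choice of analysis are hypothetical; `cars` is a data set that ships with R and stands in for real project data.

```r
# Hypothetical layout: one sub-directory of output/ per analysis
out_dir <- file.path(tempdir(), "output", "exploratory_summary")

run_analysis <- function(out_dir) {
  dir.create(out_dir, recursive = TRUE, showWarnings = FALSE)
  # Mean stopping distance for each speed in the built-in 'cars' data
  result <- aggregate(dist ~ speed, data = cars, FUN = mean)
  write.csv(result, file.path(out_dir, "mean_dist_by_speed.csv"),
            row.names = FALSE)
  invisible(result)
}

run_analysis(out_dir)
unlink(out_dir, recursive = TRUE)  # throw the output away ...
run_analysis(out_dir)              # ... and regenerate it from the script
```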

Tip: Good Enough Practices for Scientific Computing

Good Enough Practices for Scientific Computing gives the following recommendations for project organization:

- Put each project in its own directory, which is named after the project.

- Put text documents associated with the project in the doc directory.

- Put raw data and metadata in the data directory, and files generated during cleanup and analysis in a results directory.

- Put source for the project’s scripts and programs in the src directory, and programs brought in from elsewhere or compiled locally in the bin directory.

- Name all files to reflect their content or function.
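The directory layout recommended above could be created from R with something like the following sketch. `create_layout()` is a hypothetical helper written for this example, not part of RStudio or any package.

```r
# Hypothetical helper: create the recommended directories inside a project root
create_layout <- function(root = ".",
                          dirs = c("doc", "data", "results", "src", "bin")) {
  paths <- file.path(root, dirs)
  for (p in paths) {
    # recursive = TRUE also creates 'root' itself if it does not exist yet;
    # showWarnings = FALSE keeps the call quiet if a directory already exists
    dir.create(p, recursive = TRUE, showWarnings = FALSE)
  }
  invisible(paths)
}

# Demonstrated on a throwaway directory
demo_root <- file.path(tempdir(), "my_project")
create_layout(demo_root)
list.files(demo_root)
```

Inside an RStudio project, calling `create_layout()` with the default root of `"."` would create the folders in the project directory.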

Tip: ProjectTemplate - a possible solution

One way to automate the management of projects is to install the third-party package ProjectTemplate. This package will set up an ideal directory structure for project management. This is very useful as it enables you to have your analysis pipeline/workflow organized and structured. Together with the default RStudio project functionality and Git you will be able to keep track of your work as well as be able to share your work with collaborators.

- Install ProjectTemplate.

- Load the library.

- Initialize the project.

These three steps correspond to:

    install.packages("ProjectTemplate")
    library("ProjectTemplate")
    create.project("../my_project", merge.strategy = "allow.non.conflict")

For more information on ProjectTemplate and its functionality, visit the ProjectTemplate home page.

Separate function definition and application

One of the more effective ways to work with R is to start by writing the code you want to run directly in an .R script, and then running the selected lines (either using the keyboard shortcuts in RStudio or clicking the “Run” button) in the interactive R console.

When your project is in its early stages, the initial .R script file usually contains many lines of directly executed code. As it matures, reusable chunks get pulled into their own functions. It’s a good idea to keep these in two separate folders: one to store useful functions that you’ll reuse across analyses and projects, and one to store the analysis scripts.
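The sketch below shows one way this separation might look. All file, folder and function names are hypothetical. The two script files are written to a temporary directory only so that the example runs as-is; in a project you would create them in the RStudio editor.

```r
# Two folders: one for function definitions, one for analysis scripts
root <- file.path(tempdir(), "separation_demo")
dir.create(file.path(root, "functions"), recursive = TRUE, showWarnings = FALSE)
dir.create(file.path(root, "analysis"), showWarnings = FALSE)

# functions/summaries.R holds only definitions
writeLines(c(
  "standard_error <- function(x) {",
  "  sd(x, na.rm = TRUE) / sqrt(sum(!is.na(x)))",
  "}"
), file.path(root, "functions", "summaries.R"))

# analysis/01_explore.R loads the definitions, then applies them
# ('cars' is a data set that ships with R, used as stand-in data)
writeLines(c(
  "source(file.path(root, 'functions', 'summaries.R'))",
  "se_dist <- standard_error(cars$dist)"
), file.path(root, "analysis", "01_explore.R"))

# Running the analysis script pulls in the functions it needs
source(file.path(root, "analysis", "01_explore.R"))
se_dist
```

Because the analysis script contains no definitions of its own, the same functions file can be sourced from other analyses without copying code.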

Tip: avoiding duplication

You may find yourself using data or analysis scripts across several projects. Typically you want to avoid duplication to save space and avoid having to make updates to code in multiple places.

In this case I find it useful to make “symbolic links”, which are essentially shortcuts to files somewhere else on a filesystem. On Linux and OS X you can use the ln -s command, and on Windows you can either create a shortcut or use the mklink command from the Windows terminal.
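Symbolic links can also be created from within R using the base function `file.symlink()`. The following is a minimal sketch with stand-in files in a temporary directory; on Windows, creating symbolic links may require additional privileges.

```r
# A stand-in for a data file that lives in some other project
shared <- tempfile(fileext = ".csv")
writeLines(c("id,value", "1,10"), shared)

# Path where the link should appear (tempfile() only reserves a name)
link <- tempfile(fileext = ".csv")

# Create the link; the result is TRUE on success
ok <- file.symlink(from = shared, to = link)

Sys.readlink(link)  # shows the path the link points to
read.csv(link)      # the link can be read like the original file
```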

Save the data in the data directory

Now that we have a good directory structure, we will save the data file in the data/ directory.

Challenge 1

Download the gapminder data from here.

- Download the file (CTRL + S, right mouse click -> “Save as”, or File -> “Save page as”)

- Make sure it’s saved under the name gapminder-FiveYearData.csv

- Save the file in the data/ folder within your project.

We will load and inspect these data later.

Challenge 2

It is useful to get some general idea about the dataset, directly from the command line, before loading it into R. Understanding the dataset better will come in handy when making decisions on how to load it in R. Use the command-line shell to answer the following questions:

- What is the size of the file?

- How many rows of data does it contain?

- What kinds of values are stored in this file?

Solution to Challenge 2

By running these commands in the shell:

    ls -lh data/gapminder-FiveYearData.csv

    -rw-rw-r-- 1 ai ai 80K april 16 21:16 data/gapminder-FiveYearData.csv

The file size is 80K.

    wc -l data/gapminder-FiveYearData.csv

    1705 data/gapminder-FiveYearData.csv

There are 1705 lines: one header line followed by 1704 rows of data. The data looks like:

    head data/gapminder-FiveYearData.csv

    country,year,pop,continent,lifeExp,gdpPercap
    Afghanistan,1952,8425333,Asia,28.801,779.4453145
    Afghanistan,1957,9240934,Asia,30.332,820.8530296
    Afghanistan,1962,10267083,Asia,31.997,853.10071
    Afghanistan,1967,11537966,Asia,34.02,836.1971382
    Afghanistan,1972,13079460,Asia,36.088,739.9811058
    Afghanistan,1977,14880372,Asia,38.438,786.11336
    Afghanistan,1982,12881816,Asia,39.854,978.0114388
    Afghanistan,1987,13867957,Asia,40.822,852.3959448
    Afghanistan,1992,16317921,Asia,41.674,649.3413952
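If no shell is available, the same three questions can be approximated from R without parsing the file into a data frame. `describe_file()` below is a hypothetical helper written for this sketch, and the demo uses a small stand-in file so that it runs anywhere.

```r
# Hypothetical helper: basic facts about a text file, read as plain lines
describe_file <- function(path, n = 3) {
  lines <- readLines(path)
  list(
    size_bytes  = file.size(path),  # size on disk, in bytes
    n_lines     = length(lines),    # includes the header line
    first_lines = head(lines, n)    # a peek at the kinds of values stored
  )
}

# Stand-in file with the first few columns of the gapminder data
demo <- tempfile(fileext = ".csv")
writeLines(c("country,year,pop",
             "Afghanistan,1952,8425333",
             "Afghanistan,1957,9240934"), demo)

info <- describe_file(demo)
info$n_lines - 1  # rows of data, not counting the header line
```

In the project itself you would call `describe_file("data/gapminder-FiveYearData.csv")`.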

Tip: command line in RStudio

You can quickly open up a shell in RStudio using the Tools -> Shell… menu item.

Version Control

It is important to use version control with projects. Go here for a good lesson which describes using Git with RStudio.

Key Points

Use RStudio to create and manage projects with consistent layout.

Treat raw data as read-only.

Treat generated output as disposable.

Separate function definition and application.